Less Prompting, More Operating

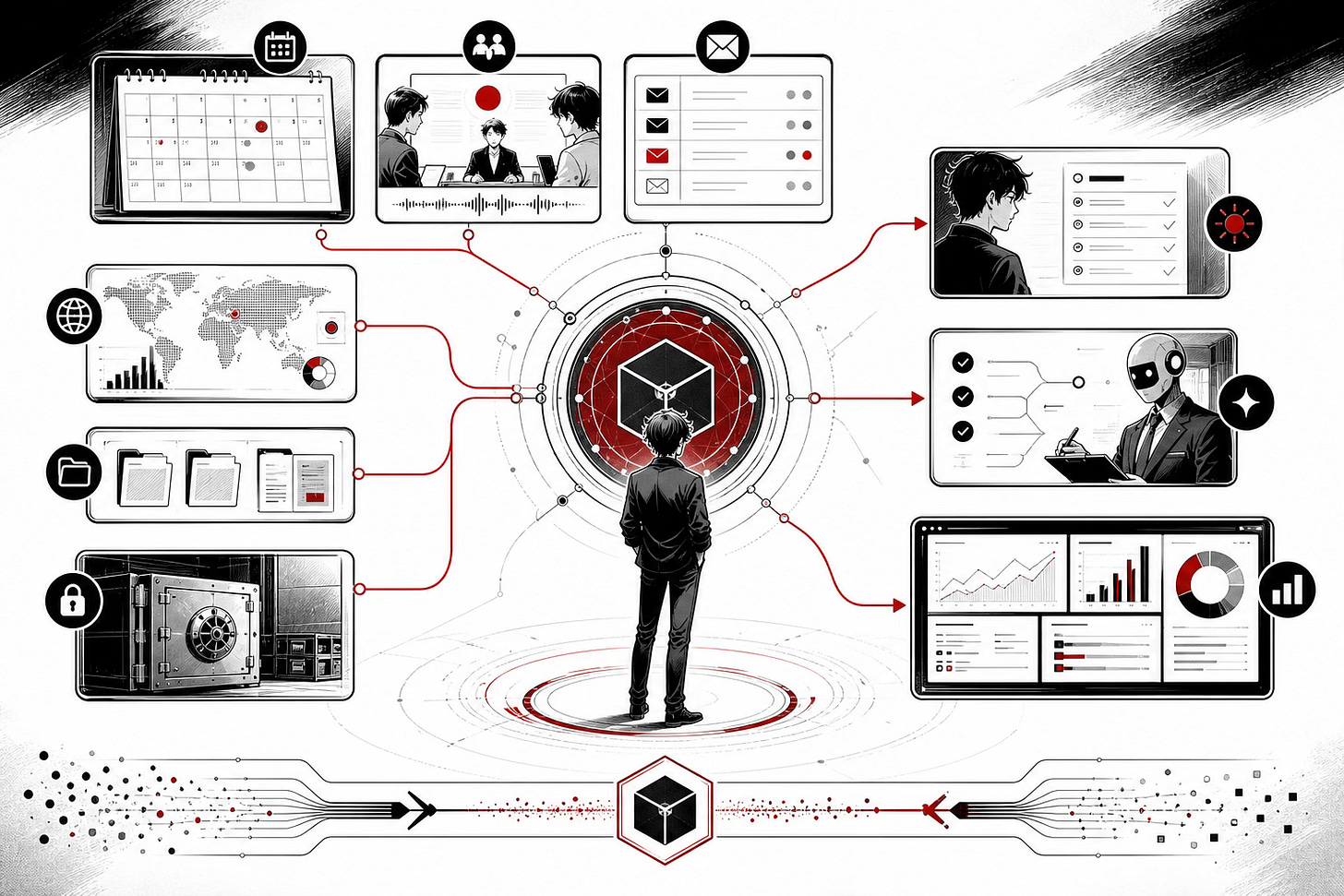

What it looks like to run your work like an AI-native company of one: a morning standup, a chief of staff, and a half-built cockpit.

I’ve spent a lot of the last month talking to asset managers and investment teams, including a growing number of conversations at the management level. The pattern is consistent: a wide spectrum of AI usage across users and firms, and people increasingly trying to work out whether they’re keeping up or already falling behind.

Most of those conversations focus on prompting. Better prompts, prompt libraries, prompt courses, single-shot chats with smarter models. That still matters, and for plenty of people sharpening their prompting is exactly the right next step.

For where I am now, the bigger unlock has been somewhere else. I’ve been pushing my own usage and have moved on from prompting as the main thing to work on. I’ve been running my work like an AI-native company of one: a morning standup that lands before I’ve made tea, a chief-of-staff skill that tells me what to do next, and a half-built cockpit for watching it all run. Less prompting, more operating. Here’s what that actually looks like.

The mindset shift

I wrapped up a consulting engagement in early April and used the breathing room to address something that had been bothering me for months. Every time I opened a Claude session it was like a smart new joiner with no memory of anything we’d done together. Every time I closed it, the context evaporated, and I’d then spend the first ten minutes of the next session catching it up.

That’s not a prompting problem. That’s an operating model problem.

A company doesn’t work that way. A company has departments, recurring processes, an institutional memory, a chief of staff, and an exec view of what’s running where. It runs whether the founder is watching or not. Most people, including me until recently, run their AI work like a sole trader with a chatbot. How do you move beyond this?

I covered the underlying theory in Context Compounds, where I argued that context management is what moves AI from single prompts to something more durable. This issue is what one-person orchestration actually looks like in practice, once it’s been set up.

How I got here

Most of my heavy general use has run through ChatGPT, which over time accumulated a reasonable picture of my life across family, volunteer work, consulting, the newsletter, and investing. Claude was reserved for more specialised work: this newsletter, coding, deeper research. Useful, but the context was fragmented across tools, and neither side knew what the other was doing.

The first move was building a personal context database that any AI tool could read. Plain markdown, sat in my Obsidian vault, structured by area. Once that was set up, I started feeding things in: meeting notes from Granola, my calendar across three accounts, project files. A new meeting goes in, the context database updates automatically, and the next session starts already knowing who I just spoke to and what we agreed.
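A minimal sketch of the "new meeting goes in, context updates" step, in Python. Everything named here is an assumption for illustration: the `context/` subfolder, one markdown file per area, and the `append_meeting_note` helper are hypothetical, and the real ingestion from Granola or a calendar is not shown.

```python
from datetime import date
from pathlib import Path


def append_meeting_note(vault: Path, area: str, title: str, summary: str,
                        on: date) -> Path:
    """Append a dated meeting entry to the markdown file for one area.

    Assumed layout (hypothetical): <vault>/context/<area>.md
    """
    area_file = vault / "context" / f"{area}.md"
    area_file.parent.mkdir(parents=True, exist_ok=True)
    # One second-level heading per meeting keeps the file easy to skim and grep.
    entry = f"\n## {on.isoformat()} {title}\n\n{summary.strip()}\n"
    with area_file.open("a", encoding="utf-8") as handle:
        handle.write(entry)
    return area_file
```

Because the output is plain markdown appended to a file, any tool that can read the vault can read the result; nothing depends on a particular AI product.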

From there it expanded into projects and tasks, then into research. I’ve been adapting Karpathy’s LLM wiki framework to ingest articles I’ve read and, more recently, stock research and earnings notes. The idea is that AI has access to everything I know, not just what I happen to type into a prompt.

It took a few weeks of using this before it stopped feeling like admin and started feeling like working with a colleague who is already briefed: the model knows in detail what I’m working on and is up to date.

A morning, concretely

The easiest way to show what changes is to walk through a single morning.

At 07:20 on a weekday, before I’ve made tea, I get a Telegram message. It’s a written standup from the company to me: today’s calendar across three accounts, what ran overnight, what’s pending from yesterday, suggested actions for today.

A typical entry reads something like this:

Overnight: market-close research surfaced NOW down 17.7% post-results, OR up 9% on Q1 beat. Gmail digest flagged a research bear case on a held position worth triangulating.

Suggested actions: prep talking points for the 10:30; review research note; finish draft emails.

The pieces underneath have been quietly running while I slept. Market-close research scanned my watchlist at 4pm yesterday. A Gmail digest filtered roughly a hundred messages down to the thirty-eight that actually matter. Both feed the morning brief.
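The shape of that pipeline can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the actual build: `Message`, `filter_digest`, and `build_brief` are hypothetical names, the keyword match is a crude stand-in for whatever does the real filtering, and fetching mail or sending to Telegram is left out entirely.

```python
from dataclasses import dataclass


@dataclass
class Message:
    sender: str
    subject: str


def filter_digest(messages, watch_terms):
    """Keep messages whose subject mentions any watched term (case-insensitive).

    Plain substring matching is an assumption made for this sketch.
    """
    terms = [term.lower() for term in watch_terms]
    return [m for m in messages if any(t in m.subject.lower() for t in terms)]


def build_brief(calendar, overnight, pending, actions):
    """Render the four standup sections as plain text, skipping empty ones."""
    sections = [
        ("Calendar", calendar),
        ("Overnight", overnight),
        ("Pending", pending),
        ("Suggested actions", actions),
    ]
    lines = []
    for heading, items in sections:
        if not items:
            continue
        lines.append(f"{heading}:")
        lines.extend(f"- {item}" for item in items)
        lines.append("")
    return "\n".join(lines).strip()
```

The design point is that each overnight job only has to produce a list of short strings; the brief is just the place where those lists meet.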

I used to get a version of this from sell-side when I was a portfolio manager at UBS. The version I run now is narrower, better-targeted, built around my own book, and costs me a Claude subscription. Issue 23 had a line about a “junior analyst running in parallel for less than the cost of a Bloomberg terminal.” This is that junior, deployed inside the firm.

Memory and a chief of staff

The morning brief was the easy part. The harder problem was memory across sessions: how to stop the model forgetting everything as soon as I close the window.

I built a small skill called /save-session. I invoke it when I’ve finished a piece of work, and it summarises what I’ve done since the last save and writes it into a folder. Over time that folder has become a searchable database of text files, project by project, day by day. An AI agent searching that folder is reading institutional memory, not guessing.
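To make the "project by project, day by day" folder concrete, here is a minimal Python sketch. It is an assumption-laden illustration rather than the skill itself: the layout `<root>/<project>/<date>.md` and the helpers `save_session` and `search_sessions` are hypothetical, and the summarising step (done by the model) is represented by a string passed in.

```python
from datetime import date
from pathlib import Path


def save_session(root: Path, project: str, summary: str, on: date) -> Path:
    """Append a session summary to <root>/<project>/<YYYY-MM-DD>.md (assumed layout)."""
    log_file = root / project / f"{on.isoformat()}.md"
    log_file.parent.mkdir(parents=True, exist_ok=True)
    # Append rather than overwrite, so several saves in one day accumulate.
    with log_file.open("a", encoding="utf-8") as handle:
        handle.write(summary.strip() + "\n\n")
    return log_file


def search_sessions(root: Path, term: str):
    """Return (relative path, line) pairs for every line containing the term."""
    hits = []
    for path in sorted(root.rglob("*.md")):
        for line in path.read_text(encoding="utf-8").splitlines():
            if term.lower() in line.lower():
                hits.append((path.relative_to(root).as_posix(), line))
    return hits
```

A search like `search_sessions(root, "bob")` returns the file and the exact line, which is what lets an agent cite a specific log entry instead of guessing.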

That’s what makes the next piece work. I run a custom skill called /propose-tasks inside any project folder. It reads the charter, the recent activity log, files I’ve touched in the last week, meeting notes from the last fortnight, and the previous session summaries. Then it gives me five to eight candidate actions, ranked, each grounded in a specific observation rather than a generic to-do.

So instead of “follow up on outreach,” I get “activity log 2026-04-21 says zip sent to Bob, confirm delivery acknowledged” or, less comfortably: “you haven’t followed up with that hedge fund on the proposal from two weeks ago.” I pick by number, and a fresh subagent runs the task.
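The grounding step, gathering what the project has actually been doing before asking for candidate actions, might look like the Python sketch below. It is a simplification under assumptions: `charter.md` as a filename, the `recent_files` and `gather_context` helpers, and a single seven-day window for everything are all hypothetical, and the ranking itself is left to the model.

```python
import time
from pathlib import Path
from typing import Optional


def recent_files(project: Path, days: int, now: Optional[float] = None):
    """List markdown files modified within the last `days` days, newest first."""
    now = time.time() if now is None else now
    cutoff = now - days * 86400
    found = []
    for path in project.rglob("*.md"):
        modified = path.stat().st_mtime
        if modified >= cutoff:
            found.append((modified, path))
    found.sort(key=lambda pair: pair[0], reverse=True)
    return [path for _, path in found]


def gather_context(project: Path, days: int = 7,
                   now: Optional[float] = None) -> str:
    """Concatenate the charter plus recently touched notes into one text block."""
    parts = []
    charter = project / "charter.md"  # assumed filename
    if charter.exists():
        body = charter.read_text(encoding="utf-8").strip()
        parts.append(f"# charter.md\n{body}")
    for path in recent_files(project, days, now):
        if path == charter:
            continue  # the charter is always included once, at the top
        rel = path.relative_to(project).as_posix()
        body = path.read_text(encoding="utf-8").strip()
        parts.append(f"# {rel}\n{body}")
    return "\n\n".join(parts)
```

Labelling each chunk with its relative path is what allows a proposed action to point back at a specific file and date.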

That’s the chief-of-staff function. It isn’t asking what I should do in the abstract; it’s asking what I should do given everything the company has been working on this week. I’m half-jokingly starting to think AI will end up directing me rather than the other way around.

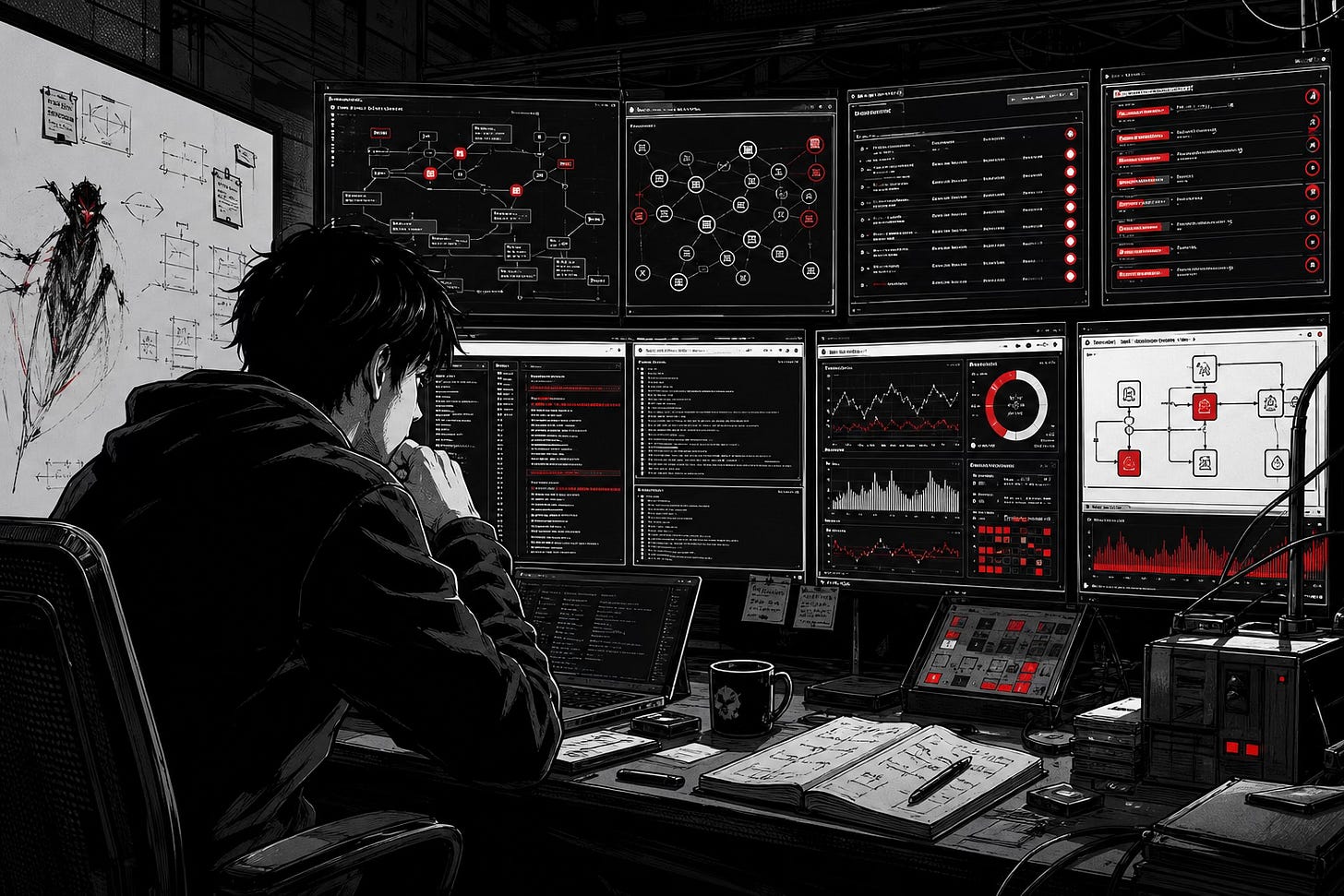

The cockpit problem

Once you have several agents and processes running, the harder problem is control: what’s running, where I am with each one, what’s stuck waiting on me. I’ve cobbled together a working setup in tmux and iTerm2 with a Streamlit dashboard on top, still half-built. The broader point is that nobody has shipped the right UI for this yet. Most “agent platforms” still assume one agent at a time, but the reality once you run several is closer to a trading desk. Worth building the cockpit yourself while the category figures itself out.

The honest limitation

A confession. Most of the writing about agents talks about handing them long-running tasks and going to bed. I haven’t got comfortable with that, and I’m not sure I will.

I’d rather be in the loop. I want to see what the agent is doing, sanity-check it, and redirect it when it goes the wrong way. Maybe I’m a micromanager when it comes to AI. But the cost of a long-running task going wrong, especially when it touches investment work or client material, is high enough that I’d rather take the small productivity hit and stay engaged.

That tension is going to be the real adoption question for institutional users. Not “can AI do this,” but “how comfortable am I being out of the room when it does.”

What’s coming next

A few threads that will turn into future issues. I’ve been quietly automating the less glamorous parts of running my concentrated 25-30 stock long-only portfolio: performance reporting that used to eat real time at UBS, a rebalancing tool that incorporates qualitative judgement instead of overriding it, and an earnings-update process that drafts notes as companies print. The interesting bit is wiring earnings into the rebalancer so the post-earnings view feeds position sizing. More on each soon.

Why it matters

The edge isn’t a better prompt. It’s AI plumbed into an operating model that runs whether you’re watching or not.

Two things sit underneath everything above. The first is context engineering: the information architecture that lets the model start each session knowing what the company already knows. The second is the harness: the schedulers, hooks, and connectors that turn one-shot chats into a system that runs on its own. Neither shows up in prompt-engineering threads, and that’s the point.

Every meeting note, session log, digest, and brief compounds. A Claude session that knows what I worked on yesterday, who I’m meeting today, which positions I hold, and what’s pending is a fundamentally different tool from a chat window with no memory. The shift is not that the model is smarter. The shift is that the company around the model is real.

Everything sits in plain markdown, which means it’s portable across tools and likely to outlast any single product. That matters more than it sounds, given how quickly this stack is moving.

This is what I think the next year of AI adoption looks like for serious individual operators. Less prompting, more operating. There’s no finishing line and this isn’t a race. What matters is making progress, whether that’s moving from once-a-week use to a daily habit or pushing into agent orchestration. The mindset move is the bit that takes longest. Once you stop treating your work as that of a single user with a chatbot and start treating it as a small AI-native company, the rest tends to fall into place.

Last month I helped a small asset manager with a single-stock monitoring build: work that would have taken a couple of weeks of analyst time was completed inside a day. Another approached me this week feeling behind. Both conversations are part of why I’m writing this issue: the gap between firms that have started this shift and firms that haven’t is widening fast.

If you’re a head of research or running an investment team and want to talk through where to start, reply to this email or message me on LinkedIn. I run a small number of 30-minute intro calls each month, no pitch. Both conversations above started exactly that way.

— Jeremy